TheatreBot

| | |
|---|---|
| Short Description: | The aim of this project is to produce autonomous robots able to act on stage together with human actors, possibly improvising, or in any case coping with the unexpected events that occur on stage. |
| Coordinator: | AndreaBonarini (andrea.bonarini@polimi.it) |
| Tutor: | AndreaBonarini (andrea.bonarini@polimi.it) |
| Collaborator: | |
| Students: | JulianMauricioAngelFernandez (julianmauricio.angel@polimi.it) |
| Research Area: | Robotics |
| Research Topic: | Robot development |
| Start: | 2013/01/12 |
| End: | 2016/12/31 |
| Status: | Closed |
| Level: | PhD |
| Type: | Thesis |

Human social interaction depends on responding appropriately to social situations. Someone who does not respond in the expected way is marginalized by others. Robots that interact with humans in everyday places, such as homes, offices, classrooms and public spaces, should therefore not only accomplish their tasks but also be accepted by humans, meaning that people feel comfortable interacting with them. As a consequence, social robots must be able to show emotions and behave in a socially correct way. However, building robots that can both accomplish their tasks and show emotion is not easy: the robot must select the correct emotion and show it in a way that humans can understand, on top of all the traditional problems of performing a given task. This makes it crucial to find a real environment that allows research efforts to focus on producing effective social and emotional interaction, without the need for other abilities (e.g., emotion detection, status detection, person recognition, etc.).

Several researchers have suggested that theatre could be an excellent place to test social and emotional abilities [4]-[8], because theatre's constraints let the actor know what to say, how to react, and where objects and other actors are expected to be. All of this information is given beforehand in a script. However, the few works [9]-[19] that have put robots on stage in the last decade have used theatre as an environment to create robots for entertainment, without considering the use of theatre actors' training theories to make the robot project emotions to the audience. TheatreBot aims to exploit theatre constraints to build a robotic platform and software that allow the robot to be an actor in theatre and not just a prop, as is currently the case.

The system and platform will be designed to allow extension to other application areas where showing emotions is important, such as robot games and assistive robots. To accomplish this goal, the robot will use a relational and social model of the world to represent its character's feelings and beliefs about the world. In addition, the concept of emotional state is used to add emotional features to action performance, yielding a full range of possibilities for showing emotional and social interaction.

Why Theatre?

Theatre is considered a lively art [1]. Thanks to its characteristics, its constraints and the lessons actors learn, it is an excellent place to test coordination and expressiveness in robots, and actor training systems [1]–[3] can inspire the development of expressive robots. People tend to think of theatre as a repetitive show, and the essential points that make theatre a lively art are often forgotten [1]:

- During a theatre performance, actors do not have a second chance to perform in front of the same audience. If an actor forgets a line, or does not portray a believable character, the audience will get a bad impression of the play.

- Each performance is unique. No matter how hard actors try to repeat the same performance each time, subtle changes can be seen: actors' and objects' stage positions, actors' mood and, more importantly, the audience's attitude.

- The audience's attitude affects actors. Actors can hear laughs, coughs and silence, and can even feel the tension in the audience. This can encourage or discourage actors, affecting the whole performance.

- The outcome of a performance does not rely on one person. A good outcome comes from correct collaboration and coordination among playwright, director, technical staff and performers. The performers in particular must work as a unit and present a coherent story to the public.

Theatre is an excellent framework for focusing on the specific abilities and features needed to produce effective social and emotional interaction, without the need for other abilities (e.g., emotion detection, status detection, person recognition, etc.), since the corresponding information is provided by the script and the director:

- The play script contains all the necessary information: actions, coordination cues, dialogues, and characters' attitudes.

- Since the script is known before any performance, rehearsals can be held to become familiar with objects' and performers' positions.

- The stage space is discretized to make it easier for directors to give instructions and for actors to remember their positions.

- Actors basically take one of eight preset orientations during a performance.
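The last two constraints can be illustrated with a minimal Python sketch. It is not the project's code: the `Stage` class, the 3 x 3 grid, the metre units and the convention that orientation index 0 corresponds to a heading of 0 degrees are all assumptions made for illustration. The sketch maps a continuous position to a discrete stage cell and snaps a heading to the nearest of eight evenly spaced orientations.

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass(frozen=True)
class Stage:
    """Rectangular stage split into a grid of equally sized cells.

    The 3 x 3 default grid is an assumption, not taken from the project.
    """
    width: float   # metres, left to right
    depth: float   # metres, front to back
    cols: int = 3
    rows: int = 3

    def cell_of(self, x: float, y: float) -> Tuple[int, int]:
        """Return the (row, col) of the cell containing the point (x, y)."""
        if not (0.0 <= x <= self.width and 0.0 <= y <= self.depth):
            raise ValueError("point is outside the stage")
        # Points lying exactly on the far edge belong to the last cell.
        col = min(int(x / self.width * self.cols), self.cols - 1)
        row = min(int(y / self.depth * self.rows), self.rows - 1)
        return row, col

    def cell_centre(self, row: int, col: int) -> Tuple[float, float]:
        """Return the (x, y) coordinates of the centre of a cell."""
        return ((col + 0.5) * self.width / self.cols,
                (row + 0.5) * self.depth / self.rows)


def snap_orientation(heading_deg: float, steps: int = 8) -> int:
    """Snap a heading in degrees to the nearest of `steps` evenly
    spaced orientations and return its index (0 .. steps - 1)."""
    step = 360.0 / steps
    return int(round((heading_deg % 360.0) / step)) % steps


stage = Stage(width=6.0, depth=6.0)
print(stage.cell_of(0.5, 5.9))   # cell containing the point
print(snap_orientation(50.0))    # index of the nearest 45-degree step
```

With a representation like this, a script instruction can refer to a cell and an orientation index instead of raw coordinates, which is closer to how a director gives instructions to human actors.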

Software Architecture

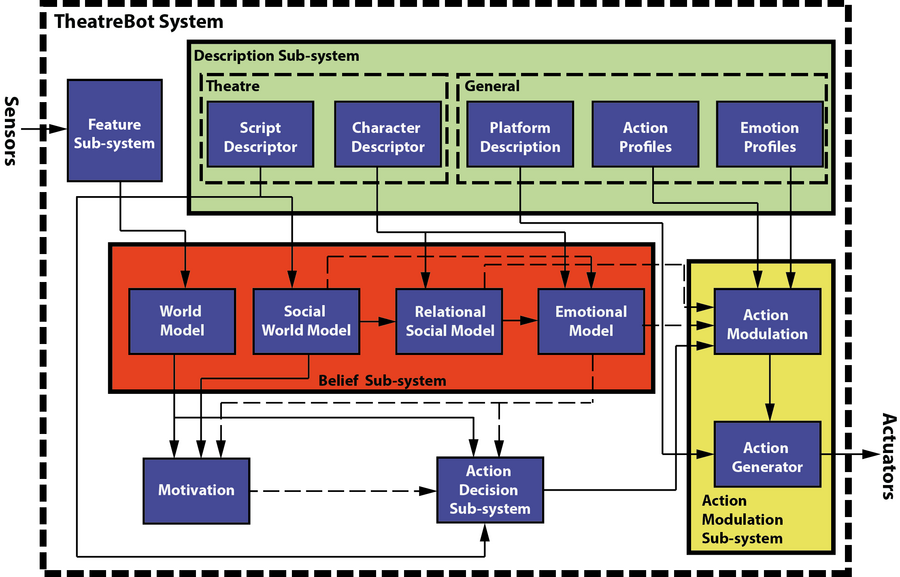

Roughly, the software architecture is compound by the following sub-systems:

- Belief

- Action Decision

- Action Modulation

- Description

- Motivation

The following image shows the proposed architecture:

As could be seen there are two kinds of lines: continues, which represents information that are used for others modules. On the other hand the dash means that the information is not mandatory, thus the other module could used or not that information. If none of the modules accept any suggestion of others modules, then the system could be seen as traditional action decision system without any kind of emotion addition.
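The difference between mandatory and optional information can be sketched in Python as follows. This is only an illustration under stated assumptions, not the TheatreBot implementation: the function names, the `Action` class, the `script_cue` key, the parameter names and the scaling values are all hypothetical. Only Action Decision and Action Modulation are modelled, Belief is reduced to a dictionary, and Description and Motivation are left out.

```python
from dataclasses import dataclass, field
from typing import Dict, Optional


@dataclass
class Action:
    name: str
    # Hypothetical performance parameters that modulation may adjust.
    params: Dict[str, float] = field(default_factory=dict)


def decide_action(beliefs: Dict[str, str],
                  suggestion: Optional[str] = None) -> Action:
    """Action Decision: beliefs are mandatory input (continuous line);
    `suggestion` is an optional hint from another module (dashed line)."""
    name = suggestion if suggestion is not None else beliefs.get("script_cue", "wait")
    return Action(name, {"speed": 1.0, "amplitude": 1.0})


# Hypothetical emotion-to-parameter scaling; the values are illustrative only.
EMOTION_SCALING: Dict[str, Dict[str, float]] = {
    "happy": {"speed": 1.3, "amplitude": 1.2},
    "sad": {"speed": 0.6, "amplitude": 0.7},
}


def modulate(action: Action, emotion: Optional[str] = None) -> Action:
    """Action Modulation: scale the action parameters by the emotional
    state if one is given; otherwise return the parameters unchanged."""
    scaling = EMOTION_SCALING.get(emotion, {}) if emotion is not None else {}
    params = {key: value * scaling.get(key, 1.0)
              for key, value in action.params.items()}
    return Action(action.name, params)


beliefs = {"script_cue": "walk_to_centre"}
plain = modulate(decide_action(beliefs))         # no optional input used
sad = modulate(decide_action(beliefs), "sad")    # emotional state applied
print(plain)
print(sad)
```

When no optional input is supplied, the output of `modulate` equals the output of `decide_action`, which mirrors the case where the system behaves as a traditional action decision system.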

Papers

Conferences

- Studying People's Emotional Responses to Robot's Movements. Julián M. Angel F. and Andrea Bonarini. 2014. In 3rd International Symposium on New Frontiers in Human-Robot Interaction at AISB'14, London.

- Towards an Autonomous Theatrical Robot. Julián M. Angel F. and Andrea Bonarini. 2013. Affective Computing and Intelligent Interaction (ACII) 2013. IEEE Computer Society, pp. 689-694.

- TheatreBot: A Software Architecture for a Theatrical Robot. Julián M. Angel F. and Andrea Bonarini. 2013. TAROS 2013.

Videos

First Platform to test How to Show Emotions

RoboAct: Simple platform showing emotion I

RoboAct: Simple platform showing emotion II

RoboAct: Simple platform showing emotion III

Robotic Platform

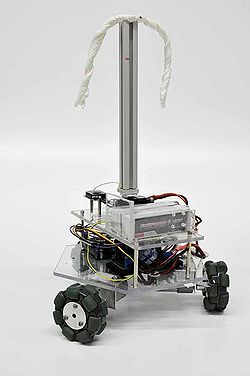

A robotic platform was developed that deliberately does not have a human-like appearance. The platform has undergone several changes during its development. The first version was built using an Arduino Mega, three metal gear motors, and one servo motor attached to a beam, as shown in the following image:

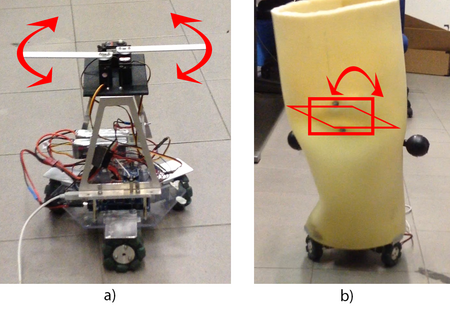

In the second version, two additional servos were added. The three servos were used to change the shape of the robot.

An Odroid U3 with ROS installed was also added. Communication between the Odroid and the Arduino went through rosserial.

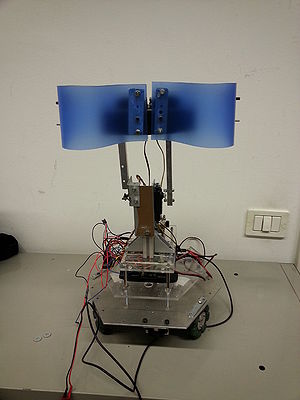

The upper part was then modified again to improve the projection of emotion. The second level, which supported all the motors, was removed. In its place, a motor was added that moves the whole level forward and backward. Two plastic parts were also used to maintain the upper shape.

Connecting with the platform

The platform now has a USB Wi-Fi adapter that can be configured to connect to a network. [TODO] explain how to set up the network by changing the network file. From a computer, you can have both an Ethernet connection and Wi-Fi communication with the platform by following the steps from this webpage

References

- [1] E. Wilson and A. Goldfarb, Theatre: The Lively Art. McGraw-Hill Education, 2009.

- [2] J. Cavanaugh, Acting Means Doing !!: Here Are All the Techniques You Need, Carrying You Confidently from Auditions Through Rehearsals - Blocking, Characterization - Into Performances, All the Way to Curtain Calls. CreateSpace, 2012.

- [3] S. Genevieve, Delsarte System of Dramatic Expression. BiblioBazaar, 2009.

- [4] G. Hoffman, “On stage: Robots as performers,” RSS 2011 Workshop on Human-Robot Interaction: Perspectives and Contributions to Robotics from the Human Sciences, 2009.

- [5] C. Breazeal, A. Brooks, J. Gray, M. Hancher, C. Kidd, J. McBean, D. Stiehl, and J. Strickon, “Interactive robot theatre,” in Intelligent Robots and Systems, 2003. (IROS 2003). Proceedings. 2003 IEEE/RSJ International Conference on, vol. 4, 2003, pp. 3648–3655 vol.3.

- [6] C.-Y. Lin, L.-C. Cheng, C.-C. Huang, L.-W. Chuang, W.-C. Teng, C.-H. Kuo, H.-Y. Gu, K.-L. Chung, and C.-S. Fahn, “Versatile humanoid robots for theatrical performances,” International Journal of Advanced Robotic Systems, 2013.

- [7] D. V. Lu and W. D. Smart, “Human-robot interactions as theatre,” in RO-MAN 2011. IEEE, 2011, pp. 473–478.

- [8] C. Pinhanez, “Computer theater,” in Proc. of the Eighth International Symposium on Electronic Arts (ISEA’97), 1997.

- [9] C.-Y. Lin, L.-C. Cheng, C.-C. Huang, L.-W. Chuang, W.-C. Teng, C.-H. Kuo, H.-Y. Gu, K.-L. Chung, and C.-S. Fahn, “Versatile humanoid robots for theatrical performances,” International Journal of Advanced Robotic Systems, 2013.

- [10] H. Knight, S. Satkin, V. Ramakrishna, and S. Divvala, “A savvy robot standup comic: Online learning through audience tracking,” TEI 2011, January 2011.

- [11] H. Knight, “Heather knight: la comedia de silicio,” TED Ideas Worth Spreading, December 2010. [Online]. Available: http://www.ted.com/talks/heather_knight_silicon_based_comedy.html

- [12] K. R. Wurst, “I comici roboti: Performing the lazzo of the statue from the commedia dell’arte,” in AAAI Mobile Robot Competition, 2002, pp. 124–128.

- [13] A. Bruce, J. Knight, and I. R. Nourbakhsh, “Robot improv: Using drama to create believable agents,” in AAAI Workshop Technical Report WS-99-15 of the 8th Mobile Robot Competition and Exhibition. AAAI Press, Menlo, 2000, pp. 27–33.

- [14] LAAS-CNRS, “Roboscopie, the robot takes the stage!” Internet. [Online]. Available: http://www.openrobots.org/wiki/roboscopie

- [15] S. Lemaignan, M. Gharbi, J. Mainprice, M. Herrb, and R. Alami, “Roboscopie: a theatre performance for a human and a robot,” in Proceedings of the seventh annual ACM/IEEE international conference on Human-Robot Interaction, ser. HRI ’12. New York, NY, USA: ACM, 2012, pp. 427–428.

- [16] C.-Y. Lin, C.-K. Tseng, W.-C. Teng, W. chen Lee, C.-H. Kuo, H.-Y. Gu, K.-L. Chung, and C.-S. Fahn, “The realization of robot theater: Humanoid robots and theatric performance,” in International Conference on Advanced Robotics, 2009. ICAR 2009., 2009.

- [17] G. Hoffman, R. Kubat, and C. Breazeal, “A hybrid control system for puppeteering a live robotic stage actor,” in RO-MAN 2008, M. Buss and K. Kühnlenz, Eds. IEEE, 2008, pp. 354–359.

- [18] Z. Paré, “Robot drama research: from identification to synchronization,” in Proceedings of the 4th international conference on Social Robotics, ser. ICSR’12. Berlin, Heidelberg: Springer-Verlag, 2012, pp. 308–316.

- [19] I. Torres, “Robots share the stage with human actors in osaka university’s ’robot theater project’,” Japandaily Press, February 2013.